Execute GPU jobs instantly from your terminal with zero setup. No manifests, no environment drift, and per-second billing. Velda eliminates infrastructure overhead, letting you focus entirely on your models.

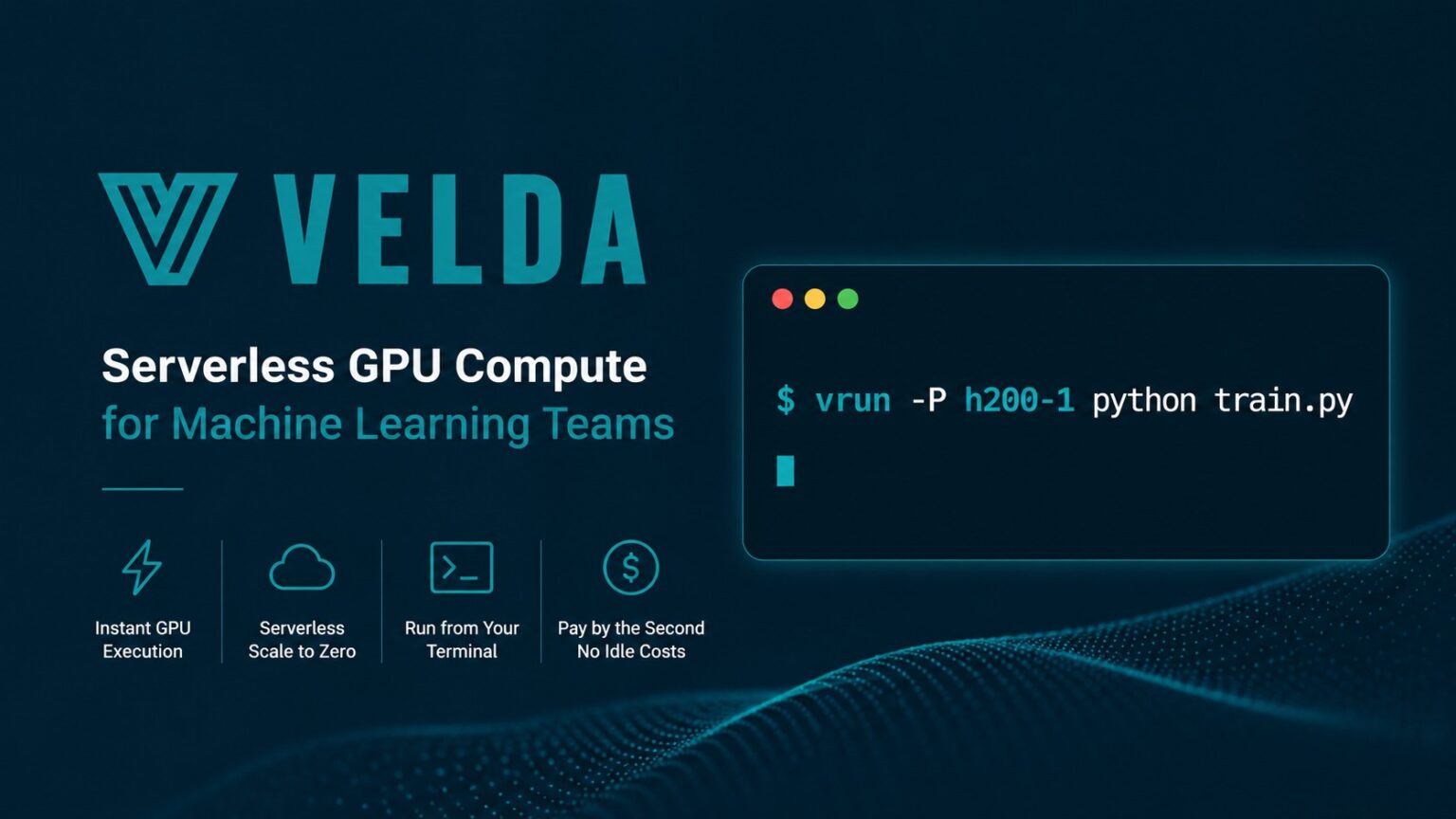

Velda, Inc. today announced the general availability of its serverless GPU job platform, purpose-built for machine learning teams that train models frequently. Unlike container-based serverless GPU platforms such as Modal, RunPod, or AWS SageMaker, Velda’s environment-first architecture allows developers to launch GPU workloads, including LLM fine-tuning, robotics model training, and large-scale batch inference, directly from their development environment with a single command prefix. This removes the container-packaging and cluster-provisioning overhead that has historically separated AI experimentation from production-scale training.

“Infrastructure has become a disproportionate drag on AI velocity,” said Chuan Qiu, Founder & CEO of Velda. “Serverless avoids the hassle of cluster management, but teams are losing days to Docker maintenance and managing environment consistency instead of focusing on their models. Running GPU workloads should not be bundled with the overhead of containers or cluster management.”

The Problem Velda Solves

ML and AI teams training large language models, physical AI models, and other deep learning workloads have long faced an infrastructure trilemma. Until today, they have been forced to choose among three costly paths:

- Static VMs and reserved GPU instances eliminate setup friction but generate idle costs around the clock, billing teams for compute that sits unused between training runs and experiments.

- Container-based or annotation-based serverless GPU solutions trade cluster overhead for a different kind of tax: proprietary libraries, container image maintenance, and environment drift between local development and remote execution.

- Slurm and Kubernetes impose significant operational complexity: steep learning curves, dedicated infrastructure expertise, and ongoing cluster maintenance that pulls ML engineers away from model development.

Velda eliminates this overhead at the architectural level rather than abstracting around it.

Platform Capabilities

- Environment-First GPU Execution. Velda provisions a hosted development environment from a curated set of templates that behaves identically to a local machine. Scaling any Python script or training job to cloud GPU compute requires only the addition of a command prefix, vrun, with no Docker image build or registry push required. This makes Velda the fastest path from a local ML development environment to a cloud GPU.

- True Serverless GPU Billing. Compute scales to zero between jobs. Teams are billed by the second for active GPU time only, with no reserved instance minimum, no idle charges, and no platform seat fees, making it one of the most cost-efficient serverless GPU platforms for iterative AI training.

- High-Performance GPU Hardware. A100 (80GB), H100, H200, and limited B200 GPUs are available on demand without reservation, covering the full spectrum from cost-efficient training to frontier model workloads.
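As a rough illustration of the command-prefix workflow described above, the sketch below turns a local command into its prefixed form. Only the `vrun` prefix comes from this announcement; the script name `train.py`, its `--epochs` flag, and the helper `with_vrun` are hypothetical, and any options `vrun` itself may accept are not covered here.

```python
import shlex


def with_vrun(command: str) -> list[str]:
    """Return the argument list for `command` with the vrun prefix added.

    The helper name is hypothetical; it only prepends the prefix and does
    not model any vrun options.
    """
    return ["vrun", *shlex.split(command)]


# Hypothetical local training command (script and flag are placeholders).
local_command = "python train.py --epochs 3"

# The same command, prefixed so it would be submitted as a GPU job.
prefixed = with_vrun(local_command)
print(shlex.join(prefixed))
```

The command itself is unchanged; under this sketch the only difference between a local run and a GPU run is the leading prefix.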

Availability and Pricing

Velda’s serverless GPU platform charges by the second for active GPU compute with no additional subscription fees. Open-source and enterprise editions are available for self-hosting.
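To make the per-second billing model concrete, the following sketch compares the cost of three short training runs billed by the second with the cost of keeping one GPU reserved for a full day. The hourly rate and run durations are hypothetical placeholders chosen for easy arithmetic, not Velda prices, and the function names are illustrative.

```python
# Hypothetical figure for illustration only; not an actual Velda price.
HYPOTHETICAL_RATE_PER_GPU_HOUR = 3.60  # USD


def per_second_cost(active_seconds: int, hourly_rate: float) -> float:
    """Cost when only active GPU seconds are billed."""
    return active_seconds * hourly_rate / 3600


def always_on_cost(hours: float, hourly_rate: float) -> float:
    """Cost of a reserved instance billed for every hour, idle or not."""
    return hours * hourly_rate


# Three hypothetical training runs in one day: 15, 30, and 45 minutes.
run_durations_seconds = [900, 1800, 2700]

serverless_total = sum(
    per_second_cost(seconds, HYPOTHETICAL_RATE_PER_GPU_HOUR)
    for seconds in run_durations_seconds
)
reserved_total = always_on_cost(24, HYPOTHETICAL_RATE_PER_GPU_HOUR)

print(f"Per-second billing: ${serverless_total:.2f}")
print(f"Reserved for 24 hours: ${reserved_total:.2f}")
```

Under these assumed numbers, 90 minutes of active GPU time is billed as 1.5 GPU-hours, while the reserved instance is billed for all 24 hours regardless of use.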

About Velda

Velda, Inc. is a serverless GPU compute company headquartered in Mountain View, California. The company builds cloud GPU infrastructure for machine learning and AI teams that prioritize development velocity over operational complexity. Velda supports LLM fine-tuning, distributed model training, and large-scale AI batch inference workloads, and offers managed cloud and self-hosting options.

Contact Info:

Name: Chuan Qiu

Organization: Velda Inc

Website: https://velda.io/?utm_source=pr1

Release ID: 89191707
